Jordan Bates • 11 min read

10 Ways to Avoid Becoming Just Another Fool on the Internet

It’s well known that humans suffer from confirmation bias: the tendency to interpret new information in ways that confirm preexisting beliefs.

We’re constantly looking for information supporting what we already believe, and we unconsciously reject virtually all information that conflicts with our current beliefs.

In a recent article, I explained that this is already a huge problem, but the Internet makes it much worse.

Why?! How?!

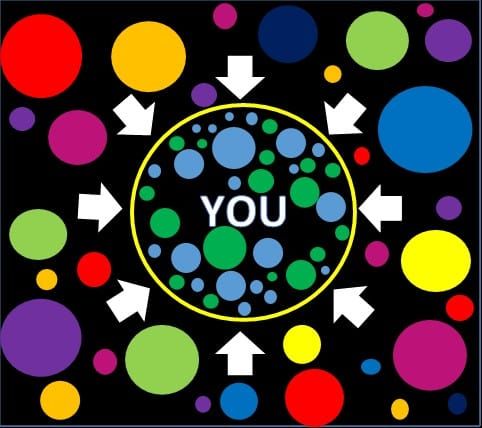

Well, here’s the gist of it: Based on what they know about you (which is a lot), Google, Facebook, YouTube, and other large websites show you content that they think you want to see—i.e. content that validates your current worldview—not content that will challenge your beliefs or make you smarter.

Furthermore, media outlets are almost always biased, and you gravitate to the ones that confirm your cherished beliefs. Lastly, the advertising-driven profit model of online publishing encourages news outlets to create clickbait content that spikes our emotions and confirms our worst fears about the world.

(Those are the barebones basics. If you want to understand the details, read the essay I wrote about this.)

These ingredients combine to create a decidedly toxic cocktail: an Internet machine that mostly just convinces people that what they already believe is Right, Good, and True.

And this is a problem. It leads to people feeling like they possess the Absolute Right Answers, and that everyone who disagrees with them is a misguided dupe, or worse. This leads to dangerous dogmatism and divisive tribalism—you know, the phenomena that have probably caused most of the violence in human history.

So the Internet is broken, and we need to do something about it. The web is not set up to help you admit your own uncertainty, attain more nuanced, accurate viewpoints, or understand those who disagree with you. It’s set up to make it really hard for you to do those things. It’s basically geared toward making you more dogmatic, tribal, and angry (because this increases profit), which, again, tends to be really bad for all of us.

How to Outsmart the Internet Overlords and Fix This Mess

I’m not sure it’s possible, to be honest.

At least on a large scale, it seems that most people are already pwned, and that it’s going to be damn difficult to reach them.

On a micro, individual scale, though, there is hope. For you personally, there are reliable methods of avoiding becoming Just Another Fool on the Internet.

The first step was merely to familiarize yourself with the problem, so congratulations, you’re making progress.

What follows is a list of additional steps to take, most of which are simply ways to be a smart person, both online and in meatspace. If you want to be more like a smart, rational person and less like a pawn in someone else’s game, read on.

1. Place a premium on evidence and strong arguments

“You should take the approach that you’re wrong. Your goal is to be less wrong.”

― Elon Musk

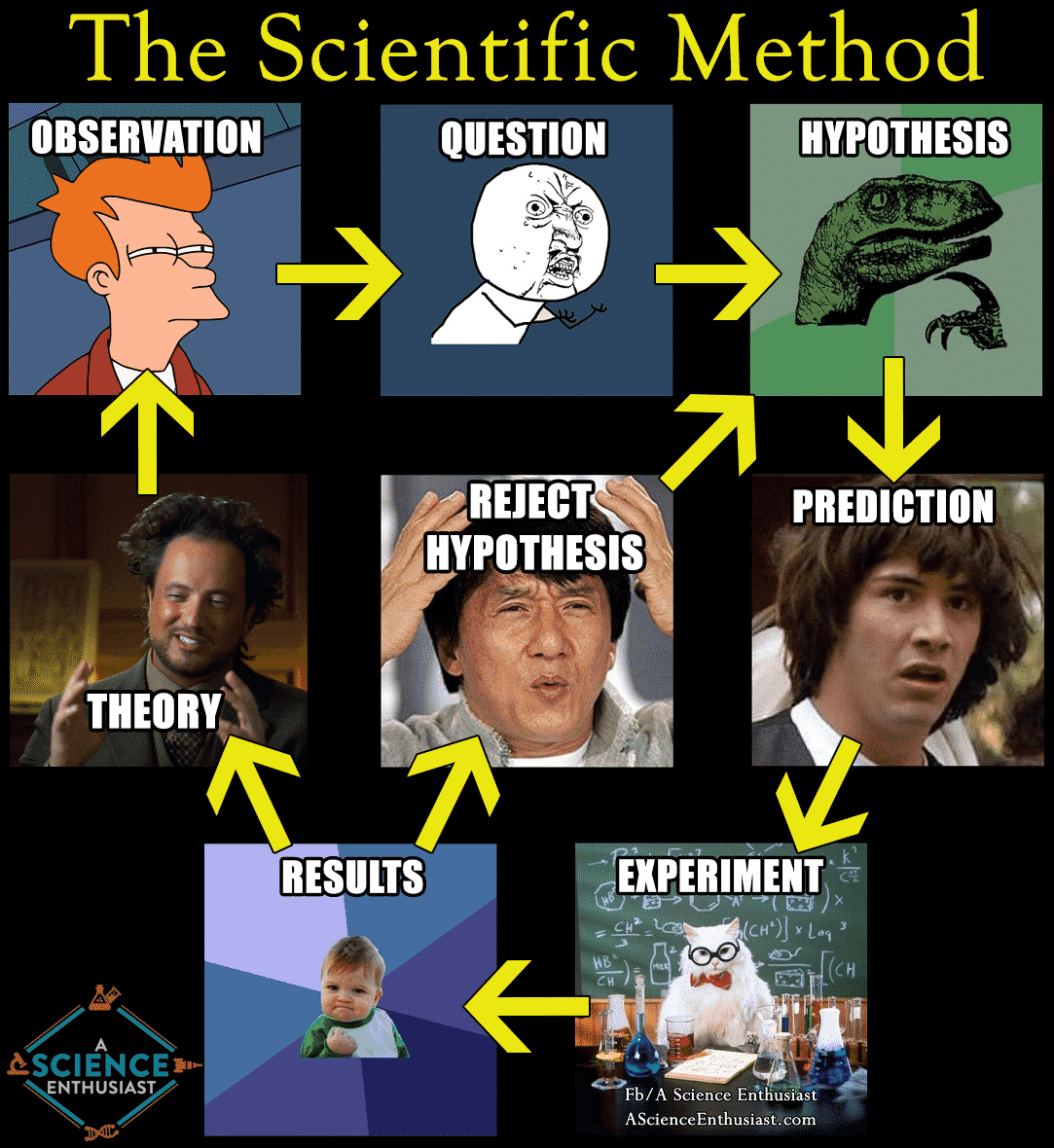

Science. Rationality. These are the essential building blocks of an ever more accurate and nuanced model of the world. Use them. Cherish them. Question all the things, especially your own viewpoints. Assume your worldview is incomplete, and that you are wrong about many things. Challenge your most sacred beliefs; find out if they are actually based on evidence and reason. (Re-)familiarize yourself with the scientific method, and aim to apply it in your own life. Learn to construct rational arguments. In this article on LessWrong, user aaronsw nicely summed up the approach of self-skepticism that characterizes all great scientists and rationalists:

“As Feynman wrote, ‘The first principle is that you must not fool yourself — and you are the easiest person to fool.’ Our beliefs always seem correct to us — after all, that’s why they’re our beliefs — so we have to work extra-hard to try to prove them wrong. This means constantly looking for ways to test them against reality and to think of reasons our tests might be insufficient.”

2. Believe nothing absolutely

“Belief is the death of intelligence. As soon as one believes a doctrine of any sort, or assumes certitude, one stops thinking about that aspect of existence.”

― Robert Anton Wilson

Embrace uncertainty. Again, assume that your current model of the world is in some ways wrong and incomplete and needs to be updated continuously for the rest of your life. You can still hold strong opinions, but make a point to retain enough intellectual humility to know that your models can be improved and to improve them as new information is presented to you. This approach will not only make you smarter and immune to dogma; it will also allow you to transcend limiting inherited assumptions in all areas of life and become a freer, happier person.

“When my information changes, I alter my conclusions. What do you do, sir?”

— John Maynard Keynes

3. Learn to identify reputable sources

This article is a good place to start. Here are some helpful questions it suggests one ask to determine if a given source is at least passably reputable:

- Who: Who is the author and what are his/her credentials in this topic?

- What: Is the material primary or secondary in nature?

- Where: Is the publisher or organization behind the source considered reputable? Does the website appear legitimate?

- When: Is the source current or does it cover the right time period for your topic?

- Why: Is the opinion or bias of the author apparent and can it be taken into account?

- How: Is the source written at the right level for your needs? Is the research well-documented?

4. Consult multiple sources with different ideological leanings

As mentioned above, virtually every source out there has some kind of bias or leaning. One way to attain a more balanced perspective on something is to read about it in multiple sources with different biases. For example, read about a given political issue in both The Atlantic (left-center bias) and The Wall Street Journal (right-center bias). Ideally, compare what you read in different sources with the most neutral account of the facts that you can find (from somewhere like Wikipedia). This will help you better understand media bias and arrive at a more well-rounded perspective.

5. Steelman your opponents’ arguments

You may have heard the term “straw man,” which refers to a weakened or distorted version of an opponent’s argument, contrived to make it easier to refute. “Strawmanning” is a common logical fallacy. “Steelmanning” is the opposite: a tactic employed by rationalists in which one brainstorms the strongest possible form of an opponent’s argument in order to be sure that one’s own view can actually stand up to the most intense scrutiny. You literally strengthen the arguments against your position as much as you can to see if you can still refute them.

6. Follow outgroup members and/or people outside your normal filter bubble

From Wikipedia:

“In sociology and social psychology, an ingroup is a social group to which a person psychologically identifies as being a member. By contrast, an outgroup is a social group with which an individual does not identify… It has been found that the psychological membership of social groups and categories is associated with a wide variety of phenomena.”

Your ingroups are the groups you identify with. Your outgroups are the groups with which you do not identify. So, if you’re a Democrat or progressive, try to follow some Republicans, conservatives, libertarians, and centrists. If you’re a religious person, try to follow people of other religions, atheists, and agnostics. And so on and so forth. The goal is to seek out viewpoint diversity.

Important: Don’t just follow anyone in an outgroup. Aim to follow smart, civil outgroup members with interesting arguments who are willing to have good-faith discussions. If you don’t do this, you run the risk of seeking out the most moronic, unlikeable outgroup members, simply to convince yourself of how nasty and wrong they actually are.

Here are a few people who are likely outside your ingroup/normal filter bubble that I recommend following on Twitter: Jonathan Haidt, Vinay Gupta, Sarah Perry, David Chapman, Jordan Peterson. To render your world model more accurate/nuanced, you must continuously engage the world models of smart, civil people who see differently.

“The surest way to corrupt a youth is to instruct him to hold in higher esteem those who think alike than those who think differently.”

— Friedrich Nietzsche

7. Join communities which value epistemic virtue

“Epistemic virtue” refers to openness to many ideas and viewpoints, indefatigable curiosity, a deep lust for the truth, the ability to change views based on evidence, the ability to identify biases and employ rationality, and a commitment to “utter honesty,” as Feynman put it. “Epistemic virtue” is fundamentally non-dogmatic; this is key. In a memorable passage in one of his essays, rationalist blogger Scott Alexander elaborates on “epistemic kindness,” one aspect of epistemic virtue:

“I don’t know how to fix the system, but I am pretty sure that one of the ingredients is kindness.

I think of kindness not only as the moral virtue of volunteering at a soup kitchen or even of living your life to help as many other people as possible, but also as an epistemic virtue. Epistemic kindness is kind of like humility. Kindness to ideas you disagree with. Kindness to positions you want to dismiss as crazy and dismiss with insults and mockery. Kindness that breaks you out of your own arrogance, makes you realize the truth is more important than your own glorification, especially when there’s a lot at stake.”

Examples of communities which value epistemic virtue: LessWrong, Slate Star Codex, Ribbonfarm, Meaningness, HighExistence, Refine The Mind.

8. Perpetually grey-pill yourself

We make a mistake if we assume that we ourselves are immune to dogma. Even if you consider yourself “open-minded,” you may still at some point be seduced into thinking you’ve arrived at the Final Answers about something. Thus, it’s imperative to forever challenge your own views, expose yourself to new ideas and information, and perpetually refine/expand your worldview. In an essay I consider essential reading, Venkatesh Rao referred to this process as “grey-pilling” oneself.

9. Subtly undermine excessive certainty

Plant seeds of doubt in the minds of those who are convinced of the correctness of their current world model. The way to do this is not to overtly argue with people or take on a patronizing, all-knowing attitude. Such tactics will provoke defensiveness in the other person and likely cause them to cling more tightly to their precious beliefs (see: the backfire effect).

In general, you’ll have more luck planting seeds of doubt by listening, being respectful/compassionate, and asking sincere questions: “Oh, interesting, can you explain in more detail how that would work?” or “Oh, I see where you’re coming from. What do you think of the idea that X and Y?”

If you can ask the right questions without seeming like a know-it-all, you have a chance of prompting people to examine their own viewpoints. Don’t focus on changing someone’s entire worldview on the spot; that rarely happens. Simply try to plant a seed of doubt that will gradually sprout into a full-fledged worldview-examination at a later time.

(Note: There’s something to be said here for choosing your battles. For instance, no one in the world is likely to benefit from you trying to convince your dear old Christian grandmother that her views are misguided. Such an attempt may, however, cause undue stress and ruin your relationship.)

10. Build a better Internet

As I’m sure you noticed in the intro, most of the reasons the Internet has become a Bias Confirmation Machine have to do with how we built the Internet. Google, Facebook, and YouTube were all coded by living, breathing human beings. The ad-based model of online publishing continues to pollute the online landscape because we haven’t replaced it with something better.

It’s reasonable to assume that the massive companies propagating these deficient technologies are well aware of their toxic effects. To their credit, Facebook and Google have both recently committed to incorporating fact-checking services into their user interfaces, which may prove to be an effective innovation.

Nonetheless, many of the underlying issues are unlikely to be addressed so long as online companies measure success purely in terms of the amount of time users spend on their websites and the number of times users click on things.

The advertising-driven profit model of online publishing encourages companies to value these metrics above all else. It seems we need to find a way to divorce the building of the web from the desire to get people to view/click on as many ads as possible, in order to generate an Internet that better serves humanity and aligns with our deepest values. This video is a perfect explanation of what I’m talking about, and this podcast episode is the best in-depth discussion of the problem I’ve ever found.

Fortunately, many smart people are aware of the current problems with the web and are working to fix them. One software engineer friend of mine is quite invested in building a better web and is working on curating the best content highlighting current issues, as well as the best current efforts being made to fix everything. I recommend following him on Twitter @ckhonson.

In Sum

Until the hypothetical future date when a more human-friendly, intelligence-enhancing Internet appears, we as Internet users must, unfortunately, be vigilant.

Recognize that whenever you get on the Internet, thousands of engineers have worked tirelessly behind the scenes to give you an experience that will make you want to keep coming back.

And it just so happens that people tend to keep coming back when, among other things, you give them an endless stream of signals telling them that their current beliefs are Right, Good, and True.

(There are a lot of other tricks to keep people coming back as well, but those will have to wait till a future article. If you want to accelerate your understanding, listen to this.)

Keep this in mind, and act accordingly. Believe nothing absolutely, and try to understand where others are coming from. Utilize the tips in this article to continuously refine your world model and to help others do the same.

If you do this, you will receive a great reward: the internal satisfaction of knowing that you endeavored sincerely to discover the truth, and that you sought to learn from, rather than despise, those who disagreed with you.

Click here to read Part One of this series!


Throwing Rocks at the Google Bus: How Growth Became the Enemy of Prosperity by Douglas Rushkoff

If you want to continue thinking about the issue of technological development being disconnected from the service of our deep human values, I highly recommend this book.

“In this groundbreaking book, acclaimed media scholar and author Douglas Rushkoff tells us how to combine the best of human nature with the best of modern technology. Tying together disparate threads—big data, the rise of robots and AI, the increasing participation of algorithms in stock market trading, the gig economy, the collapse of the eurozone—Rushkoff provides a critical vocabulary for our economic moment and a nuanced portrait of humans and commerce at a critical crossroads.”

Jordan Bates

Jordan Bates is a lover of God, father, leadership coach, heart healer, writer, artist, and long-time co-creator of HighExistence. — www.jordanbates.life
